
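The Simplest Way to Set Up an RTMP Streaming Server

I first wanted a simple RTMP server so that I could project my screen to classmates during lessons. I use Linux, so the usual Chinese classroom screen-sharing software was not an option, and this was the workaround. My systems are CentOS 7 and Ubuntu 16.04, so the simplest approach was to use a ready-made Docker image. Recompiling and reinstalling nginx was not realistic for me because it would directly affect my development environment.

Step 1: Install Docker

CentOS 7:

    sudo yum install docker

Ubuntu 16.04:

    sudo apt-get install docker.io

After installation, run `systemctl status docker` to check whether Docker is running. If it is not, start it with `sudo systemctl start docker`. To have Docker start automatically at boot, run `sudo systemctl enable docker`.

Docker needs root privileges. If that is inconvenient, add yourself to the `docker` user group, or simply `su root`.

Step 2: Pull and start the image

I use tiangolo/nginx-rtmp directly as the RTMP server:

    sudo docker pull tiangolo/nginx-rtmp

Once the download finishes, start the container:

    sudo docker run -d -p 1935:1935 --name nginx-rtmp tiangolo/nginx-rtmp

Step 3: Push and play the stream

You can now stream from OBS. Set the server URL to `rtmp://<your-computer-ip>/live` and enter any stream key you like, then start streaming. On a client that can play streams (for example VLC), open a network stream at `rtmp://<your-computer-ip>/live/<your-stream-key>`.

Both CentOS and Ubuntu ship with a firewall, so if pushing or receiving the stream fails, the firewall is a likely cause. Open port 1935 in the firewall, or turn the firewall off entirely (the service is usually `firewalld` on CentOS and `ufw` on Ubuntu).

Reference: "Probably the simplest way to set up an RTMP streaming server": https://zhuanlan.zhihu.com/p/52631225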
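The two addresses in the RTMP post differ only by the stream key, which is easy to get wrong when typing them into OBS and VLC. The following is a minimal sketch of that relationship; the function name `rtmp_urls`, the sample IP address, and the sample key are hypothetical, and the application name `live` is taken from the URLs quoted in the post.

```python
def rtmp_urls(host: str, stream_key: str, app: str = "live", port: int = 1935):
    """Return (OBS server URL, playback URL) for an nginx-rtmp application."""
    # The default RTMP port 1935 is left out of the URL, as in the post.
    authority = host if port == 1935 else f"{host}:{port}"
    server_url = f"rtmp://{authority}/{app}"
    # Players need the stream key appended to the server URL.
    playback_url = f"{server_url}/{stream_key}"
    return server_url, playback_url


if __name__ == "__main__":
    # Hypothetical address and key, for illustration only.
    server, playback = rtmp_urls("192.168.1.10", "classroom")
    print(server)    # goes into the OBS "Server" field
    print(playback)  # goes into the VLC "Open Network Stream" dialog
```

If the container is published on a different host port (for example `-p 1936:1935`), pass that port so it appears explicitly in both URLs.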

Quickly Running a YOLOv5 Model on an Image

Prerequisite: the model architecture has not been modified.

Method 1: Call the official pretrained YOLOv5 model

    import torch

    # Load the pretrained YOLOv5 model through torch.hub
    model = torch.hub.load('ultralytics/yolov5', 'yolov5s')  # or yolov5m, yolov5x, custom

    # Run a test inference
    img_path = './6800.jpg'  # or file, PIL, OpenCV, numpy, multiple
    results = model(img_path)  # get the predictions
    print(results.xyxy)  # predicted bounding boxes, one tensor per image
    results.show()  # display the predictions

Method 2: Call a YOLOv5 model you trained yourself (a .pt file is all you need)

    import torch

    # Load the pretrained YOLOv5 model through torch.hub
    model = torch.hub.load('ultralytics/yolov5', 'yolov5s')  # or yolov5m, yolov5x, custom

    # Load your own trained checkpoint and its parameters
    ckpt = torch.load("./best.pt", map_location=torch.device("cuda:0"))

    # Replace the network inside the hub wrapper with your trained one
    yolov5_load = model
    yolov5_load.model = ckpt["model"]

    # Run a test inference
    img_path = './6800.jpg'  # or file, PIL, OpenCV, numpy, multiple
    results = yolov5_load(img_path)  # get the predictions
    print(results.xyxy)  # predicted bounding boxes, one tensor per image
    results.show()  # display the predictions

Reference: https://github.com/ultralytics/yolov5
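The YOLOv5 post prints `results.xyxy` but does not show how to consume it. Below is a minimal sketch of one way to do that in plain Python, assuming each row has the layout `[x1, y1, x2, y2, confidence, class]` used by the YOLOv5 hub results. The helper name `to_detections` and the `min_conf` default of 0.25 are hypothetical choices for illustration.

```python
def to_detections(rows, min_conf=0.25):
    """Convert [x1, y1, x2, y2, conf, cls] rows into dicts, dropping weak boxes."""
    detections = []
    for x1, y1, x2, y2, conf, cls in rows:
        if conf < min_conf:
            continue  # skip boxes below the confidence threshold
        detections.append({
            "box": (x1, y1, x2, y2),   # corner coordinates in pixels
            "confidence": conf,
            "class_id": int(cls),      # class index is stored as a float in the row
        })
    return detections


if __name__ == "__main__":
    # Hand-written rows standing in for results.xyxy[0].tolist()
    sample = [
        [10.0, 20.0, 110.0, 220.0, 0.91, 0.0],
        [30.0, 40.0, 60.0, 80.0, 0.12, 2.0],
    ]
    for det in to_detections(sample):
        print(det)
```

With a real model, the rows for the first image would come from `results.xyxy[0].tolist()`.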

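Replacing All Occurrences with JavaScript replace

The sample string below contains 北方 ("north") twice, and each example replaces it with 南方 ("south").

With a string pattern, `replace` only replaces the first occurrence:

    var str = '我在中国北方纯正的中国北方人';
    var newstr = str.replace('北方', '南方');
    console.log(newstr); // 我在中国南方纯正的中国北方人

Use a regular expression with the `g` flag to replace every match (the replaceAll effect):

    var str = '我在中国北方纯正的中国北方人';
    var reg = new RegExp('北方', 'g');
    var newstr = str.replace(reg, '南方');
    console.log(newstr); // 我在中国南方纯正的中国南方人

Wrap it as `replaceAll` on the String prototype. Engines that implement ES2021 already provide a native `String.prototype.replaceAll`, and this definition overrides it.

    String.prototype.replaceAll = function (a, b) { // replace a with b
      // a is interpreted as a regular expression, so special characters must be escaped
      var reg = new RegExp(a, 'g'); // create the RegExp object
      return this.replace(reg, b);
    };

    // Example
    var str = '我在中国北方纯正的中国北方人';
    var newstr = str.replaceAll('北方', '南方');
    console.log(newstr); // 我在中国南方纯正的中国南方人

Reference: "How to replace all occurrences with JS replace": https://blog.csdn.net/nizhengjia888/article/details/84143650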

Measuring LAN Speed on Linux with iperf

What iperf is

Iperf is a network performance testing tool. It can measure maximum TCP and UDP bandwidth, has many parameters and UDP options that can be tuned as needed, and reports bandwidth, delay jitter, and packet loss.

Using iperf

Step 1: Start the service on the server side:

    sudo apt-get install iperf
    iperf -s -i 2    # print results every two seconds

Step 2: Start the test on the client side:

    sudo apt-get install iperf
    iperf -c <server_IP> -t 10

Reading the results

One line is printed every two seconds. Transfer is the amount of data moved in that interval, and Bandwidth is the rate at which that data was transferred.

    Server listening on TCP port 5001
    TCP window size:  128 KByte (default)
    ------------------------------------------------------------
    [  4] local 192.168.0.120 port 5001 connected with 192.168.0.233 port 56144
    [ ID] Interval       Transfer     Bandwidth
    [  4]  0.0- 2.0 sec  22.3 MBytes  93.7 Mbits/sec
    [  4]  2.0- 4.0 sec  22.4 MBytes  94.1 Mbits/sec
    [  4]  4.0- 6.0 sec  22.4 MBytes  94.2 Mbits/sec
    [  4]  6.0- 8.0 sec  22.4 MBytes  94.1 Mbits/sec
    [  4]  8.0-10.0 sec  22.4 MBytes  94.1 Mbits/sec
    [  4]  0.0-10.0 sec   113 MBytes  94.1 Mbits/sec

Reference: "arm linux iperf LAN speed test": https://blog.csdn.net/shenhuxi_yu/article/details/111612609
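The relationship between the Transfer and Bandwidth columns in the iperf output can be checked by hand. The sketch below assumes the convention that this iperf version counts an MByte as 1024 × 1024 bytes and an Mbit as 1,000,000 bits, which is consistent with the per-interval numbers in the output shown in the post. The function name `mbits_per_sec` is hypothetical.

```python
def mbits_per_sec(mbytes: float, seconds: float) -> float:
    """Convert an iperf Transfer value over an interval into Mbits/sec."""
    bits = mbytes * 1024 * 1024 * 8   # MBytes (binary) -> bits
    return bits / seconds / 1_000_000  # bits per second -> Mbits (decimal) per second


if __name__ == "__main__":
    # One interval line from the sample output: 22.4 MBytes in 2.0 seconds
    print(round(mbits_per_sec(22.4, 2.0), 2))
```

The result lands within rounding distance of the reported 94.1 Mbits/sec; the small gap comes from Transfer being printed with only one decimal place.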

YOLOv5 Project Directory Structure

    |   detect.py                 # detection script
    |   hubconf.py                # PyTorch Hub code
    |   LICENSE                   # license file
    |   README.md                 # README markdown file
    |   requirements.txt          # list of packages the project needs
    |   sotabench.py              # COCO dataset benchmark script
    |   test.py                   # model test script
    |   train.py                  # model training script
    |   tutorial.ipynb            # Jupyter Notebook demo
    |---data
    |   |   coco.yaml             # COCO dataset config
    |   |   coco128.yaml          # COCO128 dataset config
    |   |   hyp.finetune.yaml     # hyperparameters for fine-tuning
    |   |   hyp.scratch.yaml      # hyperparameters for training from scratch
    |   |   voc.yaml              # VOC dataset config
    |   |---scripts
    |   |   |   get_coco.sh       # shell script that downloads COCO
    |   |   |   get_voc.sh        # shell script that downloads VOC
    |---inference
    |   |---images                # sample images
    |   |   |   bus.jpg
    |   |   |   zidane.jpg
    |---models
    |   |   common.py             # model building blocks
    |   |   experimental.py       # experimental code
    |   |   export.py             # model export script
    |   |   yolo.py               # Detect and Model construction code
    |   |   yolov5l.yaml          # yolov5l network config
    |   |   yolov5m.yaml          # yolov5m network config
    |   |   yolov5s.yaml          # yolov5s network config
    |   |   yolov5x.yaml          # yolov5x network config
    |   |   __init__.py
    |   |---hub
    |   |   |   yolov3-spp.yaml
    |   |   |   yolov5-fpn.yaml
    |   |   |   yolov5-panet.yaml
    |---runs                      # training results
    |   |---exp0
    |   |   |   events.out.tfevents.1604835533.PC-201807230204.26148.0
    |   |   |   hyp.yaml
    |   |   |   labels.png
    |   |   |   opt.yaml
    |   |   |   precision-recall_curve.png
    |   |   |   results.png
    |   |   |   results.txt
    |   |   |   test_batch0_gt.jpg
    |   |   |   test_batch0_pred.jpg
    |   |   |   train_batch0.jpg
    |   |   |   train_batch1.jpg
    |   |   |   train_batch2.jpg
    |   |   |---weights
    |   |   |   |   best.pt       # best weights
    |   |   |   |   last.pt       # most recent weights
    |---utils
    |   |   activations.py        # activation function definitions
    |   |   datasets.py           # Dataset and Dataloader definitions
    |   |   evolve.sh             # hyperparameter evolution command
    |   |   general.py            # general project utilities
    |   |   google_utils.py       # Google Cloud helpers
    |   |   torch_utils.py        # helper code
    |   |   __init__.py
    |   |---google_app_engine
    |   |   |   additional_requirements.txt
    |   |   |   app.yaml
    |   |   |   Dockerfile
    |---VOC                       # dataset directory
    |   |---images                # dataset images
    |   |   |---train             # training images
    |   |   |   |   000005.jpg
    |   |   |   |   000007.jpg
    |   |   |   |   000009.jpg
    |   |   |   |   000012.jpg
    |   |   |   |   000016.jpg
    |   |   |   |   ......
    |   |   |---val               # validation images
    |   |   |   |   000001.jpg
    |   |   |   |   000002.jpg
    |   |   |   |   000003.jpg
    |   |   |   |   000004.jpg
    |   |   |   |   000006.jpg
    |   |   |   |   ......
    |   |---labels                # dataset labels
    |   |   |   train.cache
    |   |   |   val.cache
    |   |   |---train             # training labels
    |   |   |   |   000005.txt
    |   |   |   |   000007.txt
    |   |   |   |   000009.txt
    |   |   |   |   000012.txt
    |   |   |   |   000016.txt
    |   |   |   |   ......
    |   |   |---val               # validation labels
    |   |   |   |   000001.txt
    |   |   |   |   000002.txt
    |   |   |   |   000003.txt
    |   |   |   |   000004.txt
    |   |   |   |   000006.txt
    |   |   |   |   ......
    |---weights
    |   |   download_weights.sh       # script that downloads the weights
    |   |   yolov5l.pt                # yolov5l weights
    |   |   yolov5m.pt                # yolov5m weights
    |   |   yolov5s.mlmodel           # yolov5s weights (Core ML format)
    |   |   yolov5s.onnx              # yolov5s weights (ONNX format)
    |   |   yolov5s.pt                # yolov5s weights
    |   |   yolov5s.torchscript.pt    # yolov5s weights (TorchScript format)
    |   |   yolov5x.pt                # yolov5x weights

Reference: https://www.bilibili.com/video/BV19K4y197u8?p=14
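In the dataset layout listed in the YOLOv5 directory post, every image under `images/<split>` has a label file with the same stem under `labels/<split>`. The following is a small sketch that reports files breaking that pairing; it assumes `.jpg` images and `.txt` labels as in the listing, and the function name `unpaired_samples` is hypothetical.

```python
from pathlib import Path


def unpaired_samples(dataset_root, split="train"):
    """Return (images without labels, labels without images) as sorted stem lists."""
    root = Path(dataset_root)
    # Collect file stems such as "000005" from each side of the dataset.
    images = {p.stem for p in (root / "images" / split).glob("*.jpg")}
    labels = {p.stem for p in (root / "labels" / split).glob("*.txt")}
    return sorted(images - labels), sorted(labels - images)


if __name__ == "__main__":
    # "VOC" is the dataset directory name used in the listing.
    no_label, no_image = unpaired_samples("VOC", "train")
    print("images without labels:", no_label)
    print("labels without images:", no_image)
```

An image without a label file is not necessarily an error, since it may be an intentional background sample, but a label without an image usually points to a copy mistake.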

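Configuring Passwordless Remote Login on Linux

SSH authentication methods

SSH remote login supports two kinds of authentication:

- Username + password
- Key-based authentication: machine 1 generates a key pair and sends the public key to machine 2, which stores it. When machine 1 wants to log in to machine 2, machine 2 generates a random string, encrypts it with machine 1's public key, and sends it to machine 1. Machine 1 decrypts it with its private key and sends it back to machine 2. If the check succeeds, the login goes through.

Method 1: Username + password

Machine 1 logs in to machine 2:

    ssh <machine-2-ip>

By default ssh logs in with the same username as the current local user (root in this walkthrough). You can also name the user explicitly, for example `ssh a@192.168.25.14` logs in to machine 2 as user a.

When asked whether to continue connecting, answer `yes`. Enter the password of the root user on machine 2 to log in. Type `exit` to return to machine 1.

Method 2: Passwordless remote login

On machine 1, run:

    ssh-keygen

Press Enter three times to finish generating the private and public keys. The generated key pair can be found in the `/root/.ssh` directory.

Then run:

    ssh-copy-id <machine-2-ip>

Enter machine 2's password, and the public key is transferred to machine 2. The `authorized_keys` file under `/root/.ssh` on machine 2 now holds the public key that machine 1 just sent (you can view it with `cat` and compare it with the public key on machine 1; they are identical).

From machine 1, `ssh <machine-2-ip>` now logs in to machine 2 without asking for a password, which completes the passwordless login setup.

Reference: "Configuring passwordless remote login on Linux (illustrated)": https://www.cnblogs.com/52mm/p/p5.html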
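The challenge-response flow described in the SSH post can be illustrated with textbook RSA and deliberately tiny numbers. This is only a toy sketch of the idea as the post describes it, not how OpenSSH is implemented (current SSH protocol versions have the client sign session data rather than decrypt a challenge). The key values and all function names are illustrative assumptions.

```python
import secrets

# Toy RSA key pair built from tiny textbook primes. NOT secure, illustration only.
P, Q = 61, 53
N = P * Q   # public modulus (3233)
E = 17      # public exponent
D = 2753    # private exponent, chosen so that (E * D) % ((P - 1) * (Q - 1)) == 1


def server_make_challenge(public_key):
    """Machine 2: pick a random number and encrypt it with the stored public key."""
    e, n = public_key
    challenge = secrets.randbelow(n - 2) + 2  # random value in [2, n - 1]
    encrypted = pow(challenge, e, n)
    return challenge, encrypted


def client_answer(encrypted, private_key):
    """Machine 1: decrypt the challenge with the private key."""
    d, n = private_key
    return pow(encrypted, d, n)


def login(public_key, private_key):
    """Run one round: login succeeds only if the decrypted value matches."""
    challenge, encrypted = server_make_challenge(public_key)
    answer = client_answer(encrypted, private_key)
    return answer == challenge


if __name__ == "__main__":
    print(login((E, N), (D, N)))
```

The point of the exchange is that the private key never leaves machine 1; machine 2 only needs the public key stored in `authorized_keys`.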

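Steps to Set Up a Samba Service on Ubuntu

Step 1: Install Samba

    sudo apt install samba samba-common

Step 2: Prepare the directory to share

    # Create a directory to share
    sudo mkdir /usr/local/volumes
    # Change the ownership
    sudo chown nobody:nogroup /usr/local/volumes
    # Give all users read and write permission
    sudo chmod 777 /usr/local/volumes

Step 3: Add a Samba user

Add a Samba user to use when accessing the shared directory. The user added here must already exist as a Linux user.

    sudo smbpasswd -a alan

Step 4: Configure Samba

Edit `/etc/samba/smb.conf` and append the following at the end:

    [Volumes]
    comment = TimeCapsule Volumes
    path = /usr/local/volumes
    browseable = yes
    writable = yes
    available = yes
    valid users = alan

Step 5: Restart the Samba service

    sudo service smbd restart

Reference: "Setting up a Samba service on Ubuntu 20.04"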

Installing CUDA on Ubuntu While Ignoring the GCC Version

You have probably run the installer like this:

    $ sudo sh cuda_10.2.89_440.33.01_linux.run
    Failed to verify gcc version. See log at /var/log/cuda-installer.log for details.

Opening `/var/log/cuda-installer.log` in vim shows that the GCC version is incompatible, and that the check can be skipped with the `--override` option. So run:

    sudo sh cuda_10.2.89_440.33.01_linux.run --override

Then follow the prompts to install. The most important part of the final summary is this:

    Please make sure that
     -   PATH includes /usr/local/cuda-10.2/bin
     -   LD_LIBRARY_PATH includes /usr/local/cuda-10.2/lib64, or, add /usr/local/cuda-10.2/lib64 to /etc/ld.so.conf and run ldconfig as root

In other words, `/usr/local/cuda-10.2/bin` and `/usr/local/cuda-10.2/lib64` have to be added to the search paths. Open `~/.bashrc` in vim and append:

    export PATH="/usr/local/cuda-10.2/bin:$PATH"
    export LD_LIBRARY_PATH="/usr/local/cuda-10.2/lib64:$LD_LIBRARY_PATH"

That's all. So far I have not run into any problems caused by the GCC incompatibility.

Reference: "GCC version incompatibility when installing CUDA on Linux": https://blog.csdn.net/HaoZiHuang/article/details/109544443
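After editing `~/.bashrc` as the CUDA post describes, it is easy to forget to open a new shell and end up with the variables unset. The sketch below checks whether the two directories named in the installer summary are present; the helper name `missing_cuda_entries` is hypothetical, and the default path is the CUDA 10.2 location quoted in the post.

```python
import os

CUDA_HOME = "/usr/local/cuda-10.2"  # install prefix quoted in the installer summary


def missing_cuda_entries(env=None, cuda_home=CUDA_HOME):
    """Return (variable, directory) pairs that are not yet on the search paths."""
    env = os.environ if env is None else env
    wanted = {
        "PATH": f"{cuda_home}/bin",
        "LD_LIBRARY_PATH": f"{cuda_home}/lib64",
    }
    missing = []
    for var, directory in wanted.items():
        # Both variables are colon-separated lists of directories.
        entries = env.get(var, "").split(":")
        if directory not in entries:
            missing.append((var, directory))
    return missing


if __name__ == "__main__":
    for var, directory in missing_cuda_entries():
        print(f"{var} does not include {directory}")
```

An empty result means both entries are visible to the current process.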

Installing the Realtek 8813 Series Wireless Adapter Driver on Ubuntu

Step 1: Confirm the adapter model

    $ lsusb
    Bus 001 Device 017: ID 0bda:8813 Realtek Semiconductor Corp.

Step 2: Download the driver

This driver works ok: https://github.com/zebulon2/rtl8814au

Step 3: Install the driver

    git clone https://github.com/zebulon2/rtl8814au.git
    cd rtl8814au
    make
    sudo make install
    sudo modprobe 8814au

Reference: "Alfa AWUS1900 driver support": https://askubuntu.com/questions/981638/alfa-awus1900-driver-support
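The device line shown in step 1 of the Realtek post has the format printed by `lsusb`, where the `ID` field holds the vendor and product IDs that identify the chipset. The following is a minimal sketch that pulls those fields out of such a line; the regular expression and the function name `parse_lsusb_line` are illustrative assumptions based on the single sample line in the post.

```python
import re

# Pattern for lines such as:
# "Bus 001 Device 017: ID 0bda:8813 Realtek Semiconductor Corp."
LSUSB_LINE = re.compile(
    r"Bus (?P<bus>\d{3}) Device (?P<device>\d{3}): "
    r"ID (?P<vendor>[0-9a-f]{4}):(?P<product>[0-9a-f]{4})\s*(?P<name>.*)"
)


def parse_lsusb_line(line):
    """Return the fields of one lsusb-style line as a dict, or None if it does not match."""
    match = LSUSB_LINE.match(line.strip())
    return match.groupdict() if match else None


if __name__ == "__main__":
    info = parse_lsusb_line(
        "Bus 001 Device 017: ID 0bda:8813 Realtek Semiconductor Corp."
    )
    print(info["vendor"], info["product"], info["name"])
```

Here `0bda` is the vendor ID and `8813` is the product ID, which is the pair to search for when looking for a matching driver.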

Configuring VNC for Remote Desktop on Ubuntu 16.04

Step 1: Install

    sudo apt-get install xfce4 vnc4server xrdp

Step 2: Start vncserver to initialize it

    vncserver    # start vncserver; the first run asks you to set a login password

If you forget the password, go into the `~/.vnc/` directory and delete the `passwd` file.

Step 3: Edit the xstartup configuration file

    vim ~/.vnc/xstartup

Replace its contents with the following:

    #!/bin/sh
    # Uncomment the following two lines for normal desktop:
    # unset SESSION_MANAGER
    # exec /etc/X11/xinit/xinitrc
    #[ -x /etc/vnc/xstartup ] && exec /etc/vnc/xstartup
    #[ -r $HOME/.Xresources ] && xrdb $HOME/.Xresources
    #xsetroot -solid grey
    #vncconfig -iconic &
    #x-terminal-emulator -geometry 80x24+10+10 -ls -title "$VNCDESKTOP Desktop" &
    #x-window-manager &
    unset SESSION_MANAGER
    unset DBUS_SESSION_BUS_ADDRESS
    [ -x /etc/vnc/xstartup ] && exec /etc/vnc/xstartup
    [ -r $HOME/.Xresources ] && xrdb $HOME/.Xresources
    vncconfig -iconic &
    xfce4-session &

Step 4: Restart vncserver and xrdp

    sudo vncserver -kill :1    # stop the running vncserver
    vncserver                  # start vncserver again
    sudo service xrdp restart  # restart xrdp

Step 5: Connect

Reference: "Configuring VNC for remote desktop on Ubuntu 16.04 (sample code)": https://www.136.la/nginx/show-36314.html

Installing TensorFlow GPU on Jetson Nano

Part 1: Prerequisites and Dependencies

Before you install TensorFlow for Jetson, ensure you:

Install JetPack on your Jetson device.

Install system packages required by TensorFlow:

    $ sudo apt-get update
    $ sudo apt-get install libhdf5-serial-dev hdf5-tools libhdf5-dev zlib1g-dev zip libjpeg8-dev liblapack-dev libblas-dev gfortran

Install and upgrade pip3:

    $ sudo apt-get install python3-pip
    $ sudo pip3 install -U pip testresources setuptools==49.6.0

Install the Python package dependencies:

    $ sudo pip3 install -U numpy==1.19.4 future==0.18.2 mock==3.0.5 h5py==2.10.0 keras_preprocessing==1.1.1 keras_applications==1.0.8 gast==0.2.2 futures protobuf pybind11

Part 2: Installing TensorFlow

Note: As of the 20.02 TensorFlow release, the package name has changed from tensorflow-gpu to tensorflow. See the section on Upgrading TensorFlow for more information.

Install TensorFlow using the pip3 command. This command will install the latest version of TensorFlow compatible with JetPack 4.5.

    $ sudo pip3 install --pre --extra-index-url https://developer.download.nvidia.com/compute/redist/jp/v45 tensorflow

Note: TensorFlow version 2 was recently released and is not fully backward compatible with TensorFlow 1.x. If you would prefer to use a TensorFlow 1.x package, it can be installed by specifying the TensorFlow version to be less than 2, as in the following command:

    $ sudo pip3 install --pre --extra-index-url https://developer.download.nvidia.com/compute/redist/jp/v45 'tensorflow<2'

If you want to install the latest version of TensorFlow supported by a particular version of JetPack, issue the following command:

    $ sudo pip3 install --extra-index-url https://developer.download.nvidia.com/compute/redist/jp/v$JP_VERSION tensorflow

Where:

- JP_VERSION: The major and minor version of JetPack you are using, such as 42 for JetPack 4.2.2 or 33 for JetPack 3.3.1.

If you want to install a specific version of TensorFlow, issue the following command:

    $ sudo pip3 install --extra-index-url https://developer.download.nvidia.com/compute/redist/jp/v$JP_VERSION tensorflow==$TF_VERSION+nv$NV_VERSION

Where:

- JP_VERSION: The major and minor version of JetPack you are using, such as 42 for JetPack 4.2.2 or 33 for JetPack 3.3.1.
- TF_VERSION: The released version of TensorFlow, for example, 1.13.1.
- NV_VERSION: The monthly NVIDIA container version of TensorFlow, for example, 19.01.

Note: The version of TensorFlow you are trying to install must be supported by the version of JetPack you are using. Also, the package name may be different for older releases. See the TensorFlow For Jetson Platform Release Notes for a list of some recent TensorFlow releases with their corresponding package names, as well as NVIDIA container and JetPack compatibility.

For example, to install TensorFlow 1.13.1 as of the 19.03 release, the command would look similar to the following:

    $ sudo pip3 install --extra-index-url https://developer.download.nvidia.com/compute/redist/jp/v42 tensorflow-gpu==1.13.1+nv19.3

Part 3: Checking that TensorFlow can use the GPU

For TensorFlow-GPU 1.x.x (for example 1.2.0), use:

    import tensorflow as tf
    tf.test.is_gpu_available()

For TensorFlow-GPU 2.x.x (for example 2.2.0), use:

    import tensorflow as tf
    tf.config.list_physical_devices('GPU')

References:

- Installing TensorFlow For Jetson Platform: https://docs.nvidia.com/deeplearning/frameworks/install-tf-jetson-platform/index.html
- "Testing whether TensorFlow-GPU is available": https://www.jianshu.com/p/8eb7e03a9163
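The `$JP_VERSION` rule in the Jetson post (major and minor digits joined, so JetPack 4.2.2 becomes `42`) can be sketched as a small helper that builds the extra index URL. The base URL is the one quoted in the post; the function names `jetpack_index_url` and `pip_command` are hypothetical.

```python
BASE_URL = "https://developer.download.nvidia.com/compute/redist/jp"


def jetpack_index_url(jetpack_version: str) -> str:
    """Map a JetPack version such as '4.2.2' to its pip extra index URL."""
    parts = jetpack_version.split(".")
    if len(parts) < 2:
        raise ValueError("expected at least major.minor, e.g. '4.5'")
    major, minor = parts[0], parts[1]
    # JP_VERSION is the major and minor version joined, e.g. 4.2.2 -> 42
    return f"{BASE_URL}/v{major}{minor}"


def pip_command(jetpack_version: str, package: str = "tensorflow"):
    """Return the install command from the post as an argument list."""
    return [
        "sudo", "pip3", "install",
        "--extra-index-url", jetpack_index_url(jetpack_version),
        package,
    ]


if __name__ == "__main__":
    print(" ".join(pip_command("4.5")))
```

Returning an argument list rather than a single string avoids shell quoting problems with package specifiers such as `tensorflow<2` if the command is later passed to `subprocess.run`.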

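Edge Computing Device List

1. Jetson Nano

Where to buy and prices:

- JD 1, Leadtek JD self-operated flagship store. Link: https://item.jd.com/100007523969.html#crumb-wrap Price: 859
- JD 2, Fenghuolun smart hardware store. Link: https://item.jd.com/43596671885.html#none Price: 899
- Waveshare. Link: https://www.waveshare.net/shop/Jetson-Nano-Developer-Kit-B01.htm Price: 782.50

Specifications:

| Item | Jetson Nano |
|---|---|
| AI performance | 472 GFLOPs |
| GPU | 128-core Maxwell |
| CPU | Quad-core ARM A57 @ 1.43 GHz |
| Memory | 4 GB 64-bit LPDDR4 25.6 GB/s |
| Storage | microSD card (sold separately; optional: Micro SD Card 64GB) |
| Video encode | 4K @ 30 or 4x 1080p @ 30 or 9x 720p @ 30 (H.264/H.265) |
| Video decode | 4K @ 60 or 2x 4K @ 30 or 8x 1080p @ 30 or 18x 720p @ 30 (H.264/H.265) |
| Camera | 2x MIPI CSI-2 DPHY lanes |
| Connectivity | Gigabit Ethernet, M.2 Key E expansion slot (can take an AC8265 dual-mode network card) |
| Display | HDMI and DP display interfaces |
| USB | 4x USB 3.0, USB 2.0 Micro-B |
| Expansion interfaces | GPIO, I2C, I2S, SPI, UART |
| Other | 260-pin connector |
| Power | 5W / 10W |

2. Jetson TX2

Where to buy and prices:

- JD 1, Zhongtian Chentuo digital store. Link: https://item.jd.com/57288701121.html#crumb-wrap Price: 4100
- JD 2, Fenghuolun smart hardware store. Link: https://item.jd.com/42504341472.html#crumb-wrap Price: 4100
- Waveshare. Link: https://www.waveshare.net/shop/Jetson-TX2-Developer-Kit.htm Price: 4189.50

Specifications:

| Item | Jetson TX2 |
|---|---|
| AI performance | 1.3 TFLOPs |
| GPU | 256-core NVIDIA Pascal™ GPU |
| CPU | Dual-Core NVIDIA Denver 2 64-Bit CPU and Quad-Core ARM® Cortex®-A57 MPCore |
| Memory | 8GB 128-bit LPDDR4 Memory |
| Storage | 32GB eMMC 5.1 |
| Video | Encode: 4K x 2K 60 Hz (HEVC); decode: 4K x 2K 60 Hz (12-bit support) |
| Network | Gigabit Ethernet, Wi-Fi, Bluetooth |
| CSI | 12x CSI-2 D-PHY 1.2 (Up to 30 GB/s) |
| Display | Two Multi-Mode DP 1.2 / eDP 1.4 / HDMI 2.0; Two 1x4 DSI (1.5Gbps/lane) |
| PCIe | Gen 2, 1x4 + 1x1 OR 2x1 + 1x2 |
| Power | 7.5W / 15W |

3. Jetson Xavier NX

Where to buy and prices:

- JD, NVIDIA Bige store. Link: https://item.jd.com/10023731874172.html Price: 3899.00
- Waveshare. Link: https://www.waveshare.net/shop/Jetson-Xavier-NX-Developer-Kit.htm Price: 3675

Specifications:

| Item | Jetson Xavier NX |
|---|---|
| AI performance | 21 TOPS |
| GPU | NVIDIA Volta architecture with 384 NVIDIA CUDA cores and 48 Tensor cores |
| CPU | 6-core NVIDIA Carmel ARM v8.2 64-bit CPU, 6 MB L2 + 4 MB L3 |
| DL accelerator | 2x NVDLA Engines |
| Vision accelerator | 7-Way VLIW Vision Processor |
| Memory | 8 GB 128-bit LPDDR4x @ 51.2GB/s |
| Storage | Micro SD, sold separately |
| Video encode | 2x 4K @ 30 or 6x 1080p @ 60 or 14x 1080p @ 30 (H.265/H.264) |
| Video decode | 2x 4K @ 60 or 4x 4K @ 30 or 12x 1080p @ 60 or 32x 1080p @ 30 (H.265); 2x 4K @ 30 or 6x 1080p @ 60 or 16x 1080p @ 30 (H.264) |
| Camera | 2x MIPI CSI-2 DPHY lanes |
| Network | Gigabit Ethernet, M.2 Key E (WiFi/BT included), M.2 Key M (NVMe) |
| Display | HDMI and DisplayPort |
| USB | 4x USB 3.1, USB 2.0 Micro-B |
| Other | GPIO, I2C, I2S, SPI, UART |
| Dimensions | 103 x 90.5 x 34.66 mm |
| Power | Not given |

4. Jetson AGX Xavier

Where to buy and prices:

- JD 1, Zhongtian Chentuo digital store. Link: https://item.jd.com/35577062547.html Price: 6658.00
- JD 2, Leadtek JD self-operated flagship store. Link: https://item.jd.com/100007523939.html Price: 5799.00
- Waveshare. Link: https://www.waveshare.net/shop/Jetson-AGX-Xavier-Developer-Kit.htm Price: 5596.50

Specifications:

| Item | Jetson AGX Xavier |
|---|---|
| AI performance | 32 TOPS |
| GPU | 512-core Volta GPU (with 64 Tensor cores), 11 TFLOPS (FP16), 22 TOPS (INT8) |
| CPU | 8-core ARM v8.2 64-bit CPU, 8 MB L2 + 4MB L3 |
| Memory | 32GB 256-Bit LPDDR4x, 137GB/s |
| Storage | 32GB eMMC 5.1 |
| DL accelerator | (2x) NVDLA engines, 5 TFLOPS (FP16), 10 TOPS (INT8) |
| Vision accelerator | 7-way VLIW vision processor |
| Video encode/decode | Encode: (2x) 4Kp60 (HEVC); decode: (2x) 4Kp60 (12-bit support) |
| Dimensions | 105 mm x 105 mm x 65 mm |
| On-board module | Jetson AGX Xavier |
| Power | 10W / 15W / 30W |

5. Hikvision 4K camera

Where to buy and prices:

- JD 1, Hikvision JD self-operated flagship store. Link: https://item.jd.com/35577062547.html Price: 618

6. Hikvision 1K camera

Where to buy and prices:

- JD 1, Hikvision JD self-operated flagship store. Link: https://item.jd.com/100008757357.html Price: 286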

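Proxy Settings for apt-get on Ubuntu

Method 1: Environment variable

Set the environment variable. The following is a temporary setting for the current shell:

    export http_proxy=http://127.0.0.1:8000
    sudo apt-get update

Method 2: apt-get configuration

Edit `/etc/apt/apt.conf` and add the lines below. (The original post also mentions `/etc/environment` as an alternative; that file holds environment variables such as `http_proxy`, not `Acquire::` directives.)

    Acquire::http::proxy "http://127.0.0.1:8000/";
    Acquire::ftp::proxy "ftp://127.0.0.1:8000/";
    Acquire::https::proxy "https://127.0.0.1:8000/";

Method 3: Pass it on the command line

This is my favorite method, since apt is not something I run all the time. Add the `-o` option to the command:

    sudo apt-get -o Acquire::http::proxy="http://127.0.0.1:8000/" update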
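The three `Acquire::` lines in the apt proxy post follow one pattern, so they can be generated rather than typed. The following is a minimal sketch that reproduces the exact lines shown in the post; the function name `apt_proxy_lines` and its parameters are hypothetical, and it only prints text without touching any system file.

```python
def apt_proxy_lines(proxy_host="127.0.0.1", port=8000, schemes=("http", "ftp", "https")):
    """Build apt.conf proxy directives, one per scheme, in the style shown in the post."""
    return [
        f'Acquire::{scheme}::proxy "{scheme}://{proxy_host}:{port}/";'
        for scheme in schemes
    ]


if __name__ == "__main__":
    # Review the output before appending it to /etc/apt/apt.conf by hand.
    for line in apt_proxy_lines():
        print(line)
```

Each directive must end with a semicolon, which is the detail most often dropped when writing these lines manually.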

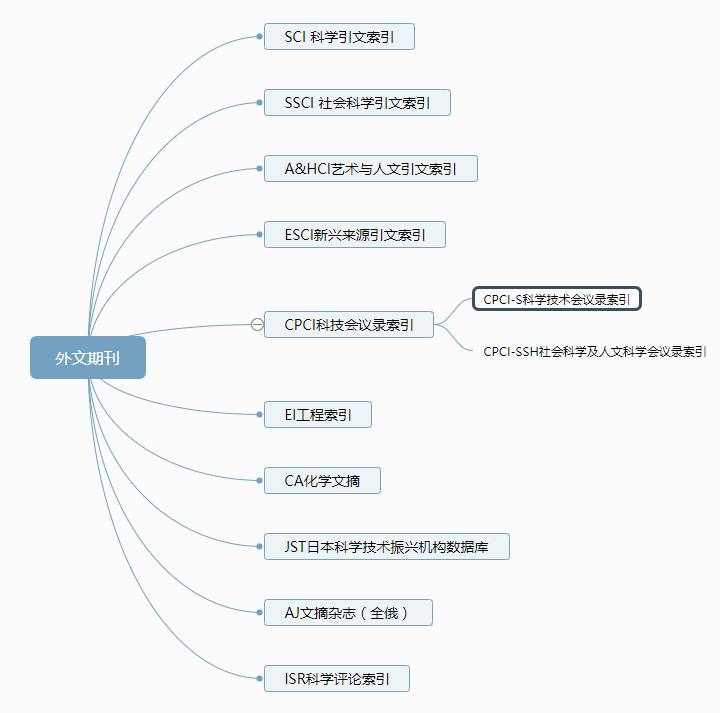

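A Quick Guide to Journal Classification

Foreign-language journals

| No. | Index | Full name | Operator | Founded by | Year founded | Key facts |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | SCI | Science Citation Index | Clarivate Analytics | Institute for Scientific Information (ISI), USA | 1964 | ① Covers journals in natural sciences, engineering, biomedicine, and many other disciplines ② Includes more than 9,000 of the most influential core academic journals across research fields |
| 2 | SSCI | Social Sciences Citation Index | Clarivate Analytics | Institute for Scientific Information (ISI), USA | 1973 | Covers 55 fields including anthropology, law, economics, history, geography, and psychology; about 3,500 journals |
| 3 | A&HCI | Arts & Humanities Citation Index | Clarivate Analytics | Institute for Scientific Information (ISI), USA | 1978 | An important abstracting and indexing database for the arts and humanities, covering more than 1,800 journals in archaeology, architecture, art, literature, philosophy, religion, history, and related fields |
| 4 | ESCI | Emerging Sources Citation Index | Clarivate Analytics | Institute for Scientific Information (ISI), USA | 2015 | Places a set of high-quality new journals under observation, helping researchers follow emerging research trends; updated irregularly |
| 5 | CPCI | Conference Proceedings Citation Index | Clarivate Analytics | Institute for Scientific Information (ISI), USA | 1978 | ① Covers papers from nearly 10,000 international science and technology conferences per year since 1990 ② Provides abstracts of proceedings papers since 1997; updated weekly |
| 6 | EI | The Engineering Index | Elsevier Engineering Information Inc. | Engineering Information Inc., USA | 1884 | ① The most comprehensive secondary-literature database for engineering worldwide ② Covers high-quality literature in civil engineering, architectural engineering, transportation, applied sciences, and other fields |
| 7 | CA | Chemical Abstracts | Chemical Abstracts Service, American Chemical Society | American Chemical Society | 1907 | ① The world's largest chemistry abstracts database ② Currently the most important retrieval tool for chemistry, chemical engineering, and related disciplines |
| 8 | JST | Japan Science and Technology Corporation database | Japan Science and Technology Agency | Japan Science and Technology Agency | 2007 | ① A web edition developed from the Japanese publication 《科学技术文献速报》 ② The agency is under Japan's Ministry of Education, Culture, Sports, Science and Technology and is Japan's most important science and technology information organization |
| 9 | AJ | Abstract Journal | All-Russian Institute for Scientific and Technical Information | All-Russian Institute for Scientific and Technical Information | 1953 | ① Notable for its coverage of natural sciences, technical sciences, and industrial economics ② One of the world's five major comprehensive abstract journals |
| 10 | ISR | Index to Scientific Reviews | Clarivate Analytics | Institute for Scientific Information (ISI), USA | 1974 | Indexes valuable review articles from more than 2,700 science and technology journals and more than 300 monograph series worldwide |

Chinese journals

| No. | Four major indexes | Full name | Operator | Founded by | Year founded | Key facts |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | CSCD | Chinese Science Citation Database (中国科学引文数据库) | National Science Library, Chinese Academy of Sciences (Library of the Chinese Academy of Sciences) | Chinese Academy of Sciences | 1989 | ① China's first citation database, known as "China's SCI" ② The first non-English-language database on the ISI Web of Knowledge platform |
| 2 | CSSCI | Chinese Social Sciences Citation Index (中国社会科学引文索引) | Chinese Social Sciences Research Evaluation Center, Nanjing University | Nanjing University & Hong Kong University of Science and Technology | 1997 | ① A key research project of the state and the Ministry of Education ② A landmark project in the evaluation of China's humanities and social sciences |
| 3 | 北大核心 (PKU Core) | A Guide to the Core Journals of China (中文核心期刊要目总览) | Peking University Press | Peking University | 1992 | A research project carried out by journal specialists from the Peking University Library and more than ten other university libraries in Beijing, together with experts from related organizations |
| 4 | 中信所核心 (ISTIC Core) | Chinese Science and Technology Paper Statistical Source Journals (中国科技论文统计源期刊) | Institute of Scientific and Technical Information of China | Institute of Scientific and Technical Information of China | 1980 | Commissioned by the Ministry of Science and Technology; developed on the model of ISI's Journal Citation Reports (JCR), adapted to conditions in China |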
